Apache Airflow

Disclaimer

Your use of this download is governed by Stonebranch’s Terms of Use.

Overview

Apache Airflow is an open source platform created to programmatically author, schedule, and monitor workflows.

This Universal Extension provides the capability to integrate with Apache Airflow and use it as part of your end-to-end Universal Controller workflow, allowing high-level visibility and orchestration of data-oriented jobs or pipelines.

Version Information

| Template Name | Extension Name | Extension Version |

|---|---|---|

| Apache Airflow | ue-airflow | 2.0.0 |

Version 2.0.0 does not support Universal Agent/Controller 7.0.0.0. See Software Requirements below for details.

Software Requirements

This integration requires a Universal Agent and a Python runtime to execute the Universal Task.

Software Requirements for Universal Template and Universal Task

Requires Python 3.7.0 or higher. Tested with the Universal Agent bundled Python distribution.

Software Requirements for Universal Agent

Both Windows and Linux agents are supported.

Universal Agent for Windows x64 Version 7.1.0.0 and later with Python options installed.

Universal Agent for Linux Version 7.1.0.0 and later with Python options installed.

Software Requirements for Universal Controller

Universal Controller Version 7.1.0.0 and later.

Supported Apache Airflow Versions

This integration is tested on Apache Airflow v2.2.3 and v2.2.5. It should be compatible with newer versions of Airflow as long as Airflow backward compatibility is preserved.

Key Features

This Universal Extension provides the following key features:

- Actions
  - Trigger a DAG Run and optionally wait until the DAG Run reaches the "success" or "failed" state.
  - Information retrieval of a specific DAG Run.
  - Information retrieval for a task that is part of a specific DAG Run.
- Authentication
  - Basic authentication for Standalone Airflow Server.
  - Service Account Private Key for Google Cloud Composer.
- Other
  - Capability to use an HTTP or HTTPS proxy instead of direct communication with the Standalone Airflow Server.
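Basic authentication against a Standalone Airflow Server amounts to sending an `Authorization` header with each REST call. The sketch below shows how such a header is formed under HTTP Basic authentication. The function name and the sample credentials are illustrative assumptions and are not taken from the extension's code.

```python
import base64


def basic_auth_header(username: str, password: str) -> dict:
    """Return an HTTP Basic Authorization header (hypothetical helper)."""
    # HTTP Basic authentication encodes "username:password" in Base64.
    raw = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(raw).decode("ascii")
    return {"Authorization": f"Basic {token}"}


if __name__ == "__main__":
    # Placeholder credentials, for illustration only.
    print(basic_auth_header("airflow_user", "airflow_password"))
```

In the Universal Task itself the user name and password come from the Airflow Credentials field, so they never need to appear in plain text.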

Import Universal Template

To use the Universal Template, you first must perform the following steps.

This Universal Task requires the Resolvable Credentials feature. Check that the Resolvable Credentials Permitted system property has been set to true.

To import the Universal Template into your Controller, follow the instructions here.

When the files have been imported successfully, refresh the Universal Templates list; the Universal Template will appear on the list.

Modifications of this integration, applied by users or customers, before or after import, might affect the supportability of this integration. For more information refer to Integration Modifications.

Configure Universal Task

For a new Universal Task, create a new task, and enter the required input fields.

Input Fields

The input fields for this Universal Extension are described in the following table.

| Field | Input type | Default value | Type | Description |

|---|---|---|---|---|

| Connect To (introduced in version 2.0.0) | Required | Standalone Airflow Server | Choice | Airflow Service Provider. |

| Action | Required | Trigger DAG Run | Choice | The action performed upon the task execution. Supported options are "Trigger DAG Run", "Read DAG Run Information", and "Read Task Instance Information". |

| Airflow Base URL | Required | - | Text | The Base URL of the Airflow server. |

| Airflow Credentials (optional since version 2.0.0) | - | - | Credentials | The Apache Airflow account credentials. The Credentials definition should be as follows. |

| Credentials Type (introduced in version 2.0.0) | - | Service Account Private Key | Choice | The authentication method for Google Cloud Composer. |

| Service Account Key (introduced in version 2.0.0) | Optional | - | Credentials | The Credentials definition should be as follows. |

| DAG ID | Required | - | Dynamic Choice | Dynamic Choice field populated by getting a list of active DAGs from the server. |

| Configuration Parameters (JSON) (introduced in version 2.0.0) | Optional | - | Large Text Field | Configuration parameters, mapped to the "conf" payload attribute of the Airflow /dags/{dag_id}/dagRuns API. It should be in JSON format. Optional for Action "Trigger DAG Run". |

| DAG Run ID | Optional | - | Text | ID of a specific DAG Run. Required for Action "Read DAG Run Information" / "Read Task Instance Information". |

| Task ID | Optional | - | Text | Dynamic Choice field populated by getting a list of Task IDs for a specific DAG ID. Required for Action "Read Task Instance Information". |

| SSL Options | Required | False | Boolean | Specifies whether the SSL protocol should be used for communication with the foreign API. Optional for Connect To = "Standalone Airflow Server". |

| CA Bundle Path | Optional | - | Text | Path and file name of the trusted certificate or CA bundle to use in certificate verification. The file must be in PEM format. Required for Connect To = "Standalone Airflow Server" and SSL Options is checked. |

| SSL Certificate Verification | Optional | True | Boolean | Determines whether the host name of the certificate should be verified against the host name in the URL. Required for Connect To = "Standalone Airflow Server" and SSL Options is checked. |

| Use Proxy | Required | False | Boolean | Enables proxy configuration so that the connection to Apache Airflow goes through a proxy. Optional for Connect To = "Standalone Airflow Server". |

| Proxy Servers | Optional | - | Text | Proxy server and port. Valid formats: http://proxyserver:port or https://proxyserver:port. Required for Connect To = "Standalone Airflow Server" and Use Proxy is checked. |

| Wait for Success or Failure (introduced in version 1.1.0) | Required | False | Boolean | If selected, the task will continue running until the DAG Run reaches the "success" or "failed" state. Required for Action "Trigger DAG Run". |

| Polling Interval (introduced in version 1.1.0) | Required | 1 | Integer | The polling interval, in seconds, between checks of the DAG Run execution state. Required for Action "Trigger DAG Run". |
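As a rough illustration of how the "Trigger DAG Run" inputs relate to the Airflow REST API, the following sketch assembles the URL and JSON body for a `POST /dags/{dag_id}/dagRuns` call. The function name is hypothetical and the extension's internal implementation may differ. The sketch also assumes that the Configuration Parameters (JSON) field holds the object that becomes the value of `conf`.

```python
import json
from urllib.parse import quote


def build_trigger_request(base_url: str, dag_id: str, conf_json: str = ""):
    """Return (url, body) for triggering a DAG Run (hypothetical helper)."""
    # Assumption: an empty Configuration Parameters field means an empty conf.
    conf = json.loads(conf_json) if conf_json.strip() else {}
    if not isinstance(conf, dict):
        raise ValueError("Configuration Parameters must be a JSON object")
    # Percent-encode the DAG ID so it is safe to place in the URL path.
    url = f"{base_url.rstrip('/')}/dags/{quote(dag_id, safe='')}/dagRuns"
    return url, {"conf": conf}


if __name__ == "__main__":
    url, body = build_trigger_request(
        "http://airflow_url:8080/api/v1",
        "example_bash_operator",
        '{"test": "parameter"}',
    )
    print(url)
    print(json.dumps(body))
```

Validating the JSON before sending the request corresponds to the kind of input check that would surface as a data validation error rather than as a failed HTTP request.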

Cancellation and Re-Run

For the "Trigger DAG Run" Action, the executing task immediately and asynchronously populates the output-only field DAG Run Id, both for information purposes and to preserve the state of execution in case of "Cancel" and "Re-run". On "Re-run", the executing task checks whether the DAG Run Id output field is populated; if it is not, the task triggers the DAG Run.

Canceling the task execution for Action "Trigger DAG Run" before the DAG Run Id output-only field is populated can lead to undesirable behavior on task "Re-run".
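The re-run check and the wait loop described above can be sketched as follows. This is a simplified model, not the extension's code: `trigger` and `get_state` are hypothetical callables standing in for the Airflow REST calls, and resuming the wait on an already recorded DAG Run Id is an assumption about what happens when the output field is populated.

```python
import time
from typing import Callable, Optional, Tuple

# States that end the wait, as named in the field descriptions.
TERMINAL_STATES = {"success", "failed"}


def run_or_resume(
    dag_run_id_output: Optional[str],
    trigger: Callable[[], str],
    get_state: Callable[[str], str],
    polling_interval: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[str, str]:
    """Trigger a DAG Run unless one is recorded, then poll until it ends."""
    # A populated DAG Run Id means the run was already triggered earlier.
    dag_run_id = dag_run_id_output or trigger()
    while True:
        state = get_state(dag_run_id)
        if state in TERMINAL_STATES:
            return dag_run_id, state
        sleep(polling_interval)


if __name__ == "__main__":
    # Fake Airflow calls, for illustration only.
    states = iter(["queued", "running", "success"])
    print(run_or_resume(None, lambda: "manual__demo", lambda _id: next(states), 0))
```

The sketch also shows why canceling before the DAG Run Id is recorded is risky: with an empty `dag_run_id_output`, a re-run has no way to tell that a DAG Run was already started and triggers another one.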

Task Examples

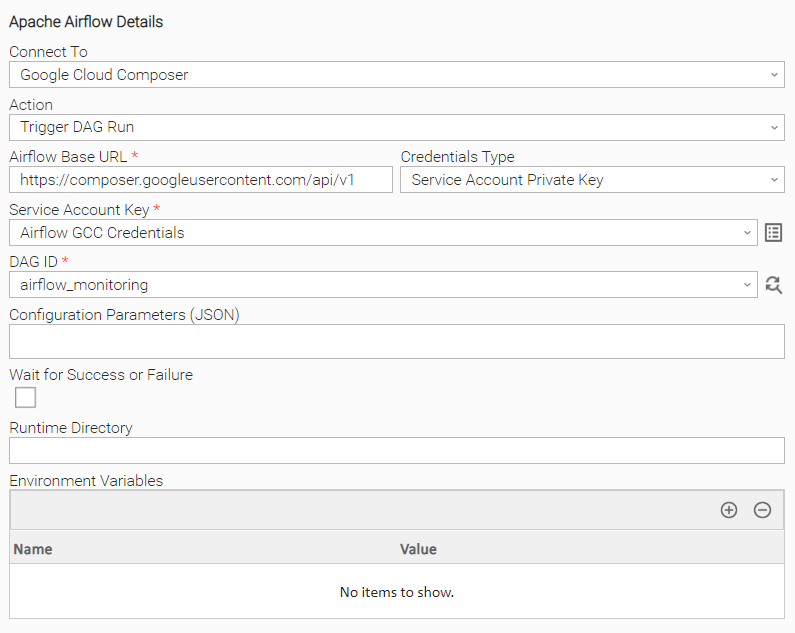

Trigger DAG Run (GCC)

Example of Universal Task for triggering a new DAG Run with Google Cloud Composer.

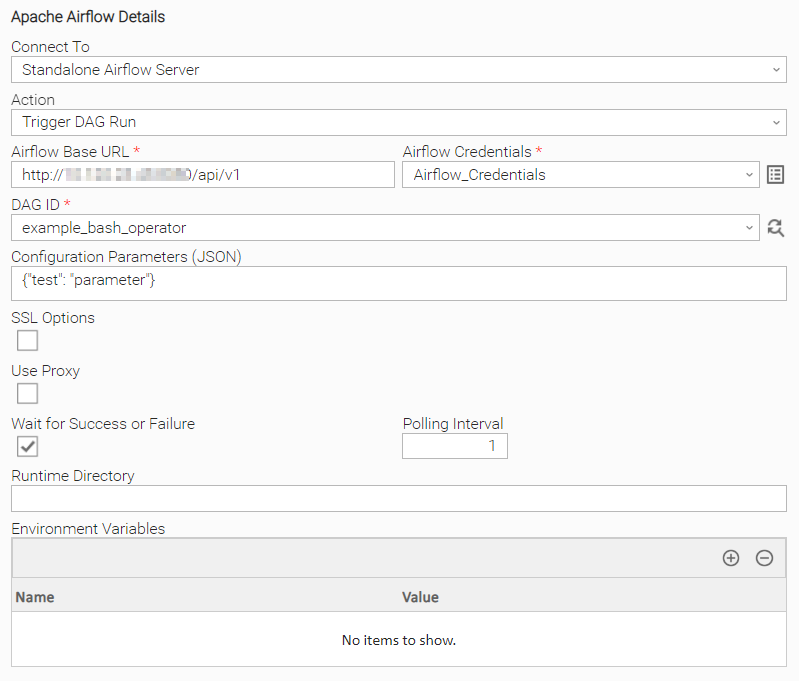

Trigger DAG Run (Standalone)

Example of Universal Task for triggering a new DAG Run on a Standalone Airflow Server and waiting for the "success" or "failed" state as the result. Configuration Parameters are also used.

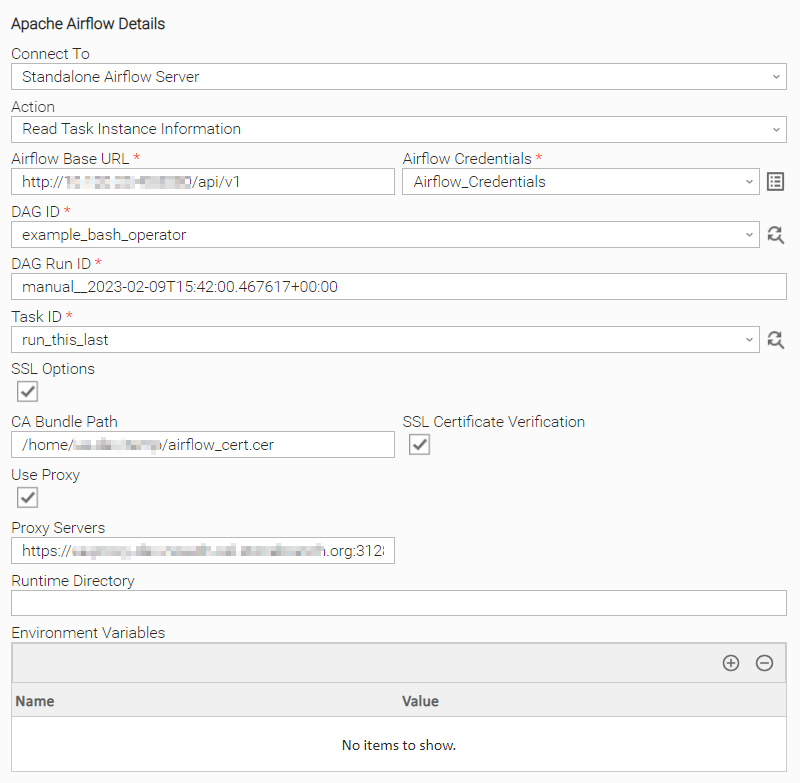

Read Airflow Task Instance Information

Example of Universal Task for getting information on a Standalone Airflow Task Instance. HTTPS Proxy, CA Bundle, and SSL Verification are used.
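To make the example more concrete, the sketch below builds the task instance URL of the Airflow REST API and a set of connection options from the SSL and proxy fields. The function is hypothetical. The option names `verify` and `proxies` follow the keyword arguments of the Python `requests` library, and whether the extension uses that library, or maps the fields in exactly this way, is an assumption.

```python
from urllib.parse import quote


def build_task_instance_request(base_url, dag_id, dag_run_id, task_id,
                                ca_bundle_path=None, ssl_verify=True, proxy=None):
    """Return (url, options) for reading a task instance (hypothetical helper)."""
    # DAG Run IDs contain ':' and '+', which must be percent-encoded in a path.
    path = "/dags/{}/dagRuns/{}/taskInstances/{}".format(
        quote(dag_id, safe=""), quote(dag_run_id, safe=""), quote(task_id, safe=""))
    options = {
        # Simplification: a CA bundle path replaces True when verification is on.
        "verify": (ca_bundle_path or True) if ssl_verify else False,
        # Assumption: the same proxy is used for HTTP and HTTPS targets.
        "proxies": {"http": proxy, "https": proxy} if proxy else None,
    }
    return base_url.rstrip("/") + path, options


if __name__ == "__main__":
    url, options = build_task_instance_request(
        "https://airflow_url:8080/api/v1",
        "example_bash_operator",
        "manual__2023-02-09T15:42:00.467617+00:00",
        "run_this_last",
        ca_bundle_path="/path/to/ca_bundle.pem",  # placeholder path
        proxy="https://proxyserver:3128",         # placeholder proxy
    )
    print(url)
    print(options)
```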

Task Output

Output Only Fields

The output fields for this Universal Extension are described below.

| Field | Type | Description |

|---|---|---|

| DAG Run Id | Text | The field is populated when Action is one of the following. |

| DAG Run State | Text | The field represents the state of the DAG Run and it is populated when Action is one of the following. The available values are |

Exit Codes

The exit codes for this Universal Extension are described in the following table.

| Exit Code | Status Classification Code | Status Classification Description | Status Description |

|---|---|---|---|

| 0 | SUCCESS | Successful Execution | SUCCESS: Successful Task Execution |

| 1 | FAIL | Failed Execution | FAIL: <Error Description> |

| 2 | AUTHENTICATION_ERROR | Authentication Error | AUTHENTICATION_ERROR: <Error Description> |

| 3 | CONNECTION_ERROR | Connection Error | CONNECTION_ERROR: <Error Description> |

| 20 | DATA_VALIDATION_ERROR | Input fields Validation Error | DATA_VALIDATION_ERROR: <Error Description> |

| 21 | REQUEST_FAILURE | HTTP request error | REQUEST_FAILED: <Error Description> |

| 22 | FAIL | Failed Execution | FAIL: DAG Run was triggered, but the status of DAG Run is 'Failed'. |
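A script that reacts to the outcome of this task could translate the exit codes from the table above as in the following sketch. The dictionary mirrors the table; the function names and the "UNKNOWN" fallback are illustrative assumptions.

```python
# Exit code -> Status Classification Code, copied from the Exit Codes table.
EXIT_CODES = {
    0: "SUCCESS",
    1: "FAIL",
    2: "AUTHENTICATION_ERROR",
    3: "CONNECTION_ERROR",
    20: "DATA_VALIDATION_ERROR",
    21: "REQUEST_FAILURE",
    22: "FAIL",
}


def classify(exit_code: int) -> str:
    """Return the classification code, or "UNKNOWN" for unlisted codes."""
    return EXIT_CODES.get(exit_code, "UNKNOWN")


def dag_run_failed(exit_code: int) -> bool:
    """True when the DAG Run was triggered but ended in the failed state."""
    return exit_code == 22


if __name__ == "__main__":
    for code in (0, 21, 22, 99):
        print(code, classify(code), dag_run_failed(code))
```

Exit codes 1 and 22 share the classification FAIL, so distinguishing a failed DAG Run from a general task failure requires checking the numeric code rather than the classification.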

Extension Output

In the context of a workflow, subsequent tasks can rely on the information provided by this integration as Extension Output.

The Extension Output contains the attribute result. The result attribute, as displayed below, is based on the response of the related Airflow REST APIs for the respective Actions and Airflow version 2.2.3 or 2.2.5. Other versions of Airflow may produce different information as part of the result attribute.

Attribute changed is populated as follows.

- true, when a DAG Run is successfully triggered.

- false, when a DAG Run is not triggered or the Action is "Read DAG Run Information" or "Read Task Instance Information".

The result section includes attributes as described in the official Airflow API documentation.

The Extension Output for this Universal Extension is in JSON format as described below.

For Action

- Trigger DAG Run

- Read DAG Run Information

The Extension Output below refers to Trigger DAG Run (Standalone) task example.

    {
      "exit_code": 22,
      "status_description": "FAIL: DAG Run was triggered, but the status of DAG Run is 'Failed'.",
      "changed": true,
      "invocation": {
        "extension": "ue-airflow",
        "version": "2.0.0",
        "fields": {
          "connect_to": "standalone_airflow_server",
          "credentials_type_google": null,
          "service_account_key": null,
          "base_url": "http://airflow_url:8080/api/v1",
          "credentials_user": "****",
          "credentials_password": "****",
          "use_ssl": false,
          "ssl_verify": false,
          "trusted_certificate_file": null,
          "ssl_hostname_check": false,
          "private_key_certificate": null,
          "public_key_certificate": null,
          "use_proxy": false,
          "proxies": null,
          "action": "trigger_dag_run",
          "dag_id": "example_bash_operator",
          "dag_run_id": "manual__2023-02-09T20:20:39.820239+00:00",
          "task_id": null,
          "wait_for_success_or_failure": true,
          "polling_interval": 1,
          "configuration_parameters": {
            "conf": {
              "test": "parameter"
            }
          },
          "dag_run_id_output": null
        }
      },
      "result": {
        "conf": {
          "test": "parameter"
        },
        "dag_id": "example_bash_operator",
        "dag_run_id": "manual__2023-02-09T20:20:39.820239+00:00",
        "end_date": "2023-02-09T20:20:54.717256+00:00",
        "execution_date": "2023-02-09T20:20:39.820239+00:00",
        "external_trigger": true,
        "logical_date": "2023-02-09T20:20:39.820239+00:00",
        "start_date": "2023-02-09T20:20:40.022415+00:00",
        "state": "failed"
      }
    }

For Action

- Read Task Instance Information

The Extension Output below refers to Read Airflow Task Instance information task example.

    {
      "exit_code": 0,
      "status_description": "SUCCESS: Successful Task Execution!",
      "changed": false,
      "invocation": {
        "extension": "ue-airflow",
        "version": "2.0.0",
        "fields": {
          "connect_to": "standalone_airflow_server",
          "credentials_type_google": null,
          "service_account_key": null,
          "base_url": "http://airflow_url:8080/api/v1",
          "credentials_user": "****",
          "credentials_password": "****",
          "use_ssl": true,
          "ssl_verify": true,
          "trusted_certificate_file": "****",
          "ssl_hostname_check": false,
          "private_key_certificate": null,
          "public_key_certificate": null,
          "use_proxy": true,
          "proxies": "https://ue-proxy-dev-noauth-ssl.stonebranch.org:3128",
          "action": "get_task_instance",
          "dag_id": "example_bash_operator",
          "dag_run_id": "manual__2023-02-09T15:42:00.467617+00:00",
          "task_id": "run_this_last",
          "wait_for_success_or_failure": false,
          "polling_interval": 1,
          "configuration_parameters": {
            "conf": {}
          },
          "dag_run_id_output": null
        }
      },
      "result": {
        "dag_id": "example_bash_operator",
        "duration": 0.0,
        "end_date": "2023-01-10T09:26:55.661975+00:00",
        "execution_date": "2023-01-09T14:16:48.856712+00:00",
        "executor_config": "{}",
        "hostname": "",
        "max_tries": 0,
        "operator": "DummyOperator",
        "pid": null,
        "pool": "default_pool",
        "pool_slots": 1,
        "priority_weight": 1,
        "queue": "default",
        "queued_when": null,
        "sla_miss": null,
        "start_date": "2023-01-10T09:26:55.661975+00:00",
        "state": "upstream_failed",
        "task_id": "run_this_last",
        "try_number": 0,
        "unixname": "****"
      }
    }
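A subsequent task in a workflow could read the Extension Output shown above along the lines of the following sketch. The attribute names are taken from the JSON examples; the function name, the shape of the summary, and the way the JSON text reaches the script are assumptions for illustration.

```python
import json


def summarize_extension_output(raw: str) -> dict:
    """Extract the attributes a downstream task typically needs (hypothetical helper)."""
    output = json.loads(raw)
    # "result" mirrors the Airflow REST API response and may vary by Airflow version.
    result = output.get("result") or {}
    return {
        "exit_code": output["exit_code"],
        "triggered": bool(output.get("changed")),
        "dag_run_id": result.get("dag_run_id"),
        "state": result.get("state"),
    }


if __name__ == "__main__":
    # Shortened excerpt of the Trigger DAG Run example output.
    sample = """
    {
      "exit_code": 22,
      "changed": true,
      "result": {
        "dag_run_id": "manual__2023-02-09T20:20:39.820239+00:00",
        "state": "failed"
      }
    }
    """
    print(summarize_extension_output(sample))
```

Reading `state` through `dict.get` keeps the sketch tolerant of Airflow versions whose responses carry a different set of result attributes.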

STDOUT and STDERR

STDOUT and STDERR provide additional information to the user. The populated content can change in future versions of this extension without notice. Backward compatibility is not guaranteed.

Integration Modifications

Modifications applied by users or customers, before or after import, might affect the supportability of this integration. The following modifications are discouraged to retain the support level as applied for this integration.

- Python code modifications should not be done.

- Template Modifications
  - General Section
    - "Name", "Extension", "Variable Prefix", "Icon" should not be changed.
  - Universal Template Details Section
    - "Template Type", "Agent Type", "Send Extension Variables", "Always Cancel on Force Finish" should not be changed.
  - Result Processing Defaults Section
    - Success and Failure Exit codes should not be changed.
    - Success and Failure Output processing should not be changed.
  - Fields Restriction Section
    The setup of the template does not impose any restrictions. However, with respect to the "Exit Code Processing Fields" section:
    - Success/Failure exit codes need to be respected.
    - In principle, as STDERR and STDOUT outputs can change in follow-up releases of this integration, they should not be considered a reliable source for determining success or failure of a task.

- General Section

Users and customers are encouraged to report defects or feature requests at the Stonebranch Support Desk.

Document References

This document references the following documents.

| Document Link | Description |

|---|---|

| Universal Templates | User documentation for creating, working with and understanding Universal Templates and Integrations. |

| Universal Tasks | User documentation for creating Universal Tasks in the Universal Controller user interface. |

| Credentials | User documentation for creating and working with credentials. |

| Resolvable Credentials Permitted Property | User documentation for Resolvable Credentials Permitted Property. |

| Apache Airflow Documentation | User documentation for Apache Airflow. |

| Apache Airflow API Documentation | User Documentation for Airflow REST API. |

| Google Cloud Composer Airflow | User Documentation for Google Cloud Composer. |

Changelog

ue-airflow-2.0.0 (2023-02-24)

Enhancements

Added: Support for Google Cloud Composer Airflow

Added: Support for passing JSON configuration parameters on Action "Trigger DAG Run"

Deprecations and Breaking Changes

Breaking Change: Stop supporting Universal Agent/Controller 7.0.0.0. Support Universal Agent/Controller 7.1.0 or higher.

ue-airflow-1.1.0 (2022-06-10)

Enhancements

Added: Support for triggering a DAG Run and waiting for state "success" or "failed".

ue-airflow-1.0.0 (2022-03-03)

- Initial version.